ProofRun | Proof-of-Work Hiring

ProofRun lets companies turn real backlog work into mini missions so candidates can prove their resourcefulness and AI-native skills, earn real performance metrics for their portfolio, and flow directly into a recruiter's talent pipeline.

Instead of asking candidates to narrate why they might be good, ProofRun lets them show it on bounded, real work. A founder gets backlog leverage, a candidate gets verified proof, and the hiring manager gets a much higher-signal filter than a resume, degree, or generic take-home.

Modern AI can already automate or significantly speed up roughly 40 to 60 percent of tasks in many knowledge roles. Since late 2022, workers aged 22 to 25 in the most AI-exposed occupations have seen roughly a 15 to 16 percent relative employment decline, while older workers in the same occupations were broadly stable.

Meanwhile, only about half of bachelor's degree graduates secure a college-level job within a year of graduation. AI raised the bar. The on-ramps for new talent did not.

It is now cheaper to train an AI on junior work than to train a junior on the job.

Companies still cannot see who is worth betting on.

Company Side

Repetitive early-career tasks in marketing, sales ops, support, and basic analysis are the easiest to hand to AI, so those roles vanish first.

What remains for humans are high-context decisions: choosing angles, handling edge cases, designing experiments, and telling AI what to do in the context of a specific funnel, product, and brand.

Hiring stayed stuck on degrees, past titles, resume keyword filters, and generic take-homes. None of that shows whether someone can use modern tools to move ROAS, CTR, CPA, search rankings, reply rate, activation, or revenue on this business.

Candidate Side

Most candidates are not building projects. They are blasting the same resume into dozens of roles and disappearing into filtering systems. Their experience reads as academic or unrelated. It is not obvious how any of it maps to the company's metrics.

The minority who do build side projects are usually doing it in a vacuum. No real ad spend. No live traffic. No sales pipeline. No actual users. For a hiring manager, those projects look like homework, not proof.

So companies can increasingly get "junior-level output" from AI plus a few senior operators.

Candidates have almost no structured way to touch real problems, get real feedback, and build a track record that hiring managers actually trust.

That is the gap ProofRun exists to close.

Convert real company work into scoped missions.

Catchline: Hiring becomes proof-of-work.

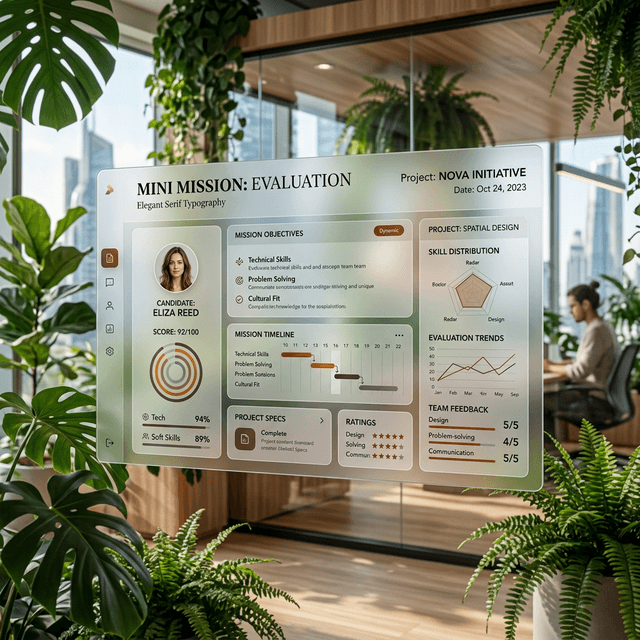

1. Mini Missions

Carved from real backlog. Includes project spec, ICP, brand guidelines, and historical data.

2. Taste Check

Optional 10-20 min prescreen. Pick best ads, rewrite a CTA, or compare UX flows to filter for seriousness.

3. Orchestrate & Ship

Candidates opt in, use any AI tools, and ship. Success depends on judgment plus AI orchestration.

4. Tie to Metrics

Top work touches production. ProofRun pulls outcomes: ROAS, CPA, activation lift, or reply rate.

Mentorship and Portfolios

Mentorship on demand: Candidates can pay for line-by-line feedback from vetted mentors or from the company itself. The loop becomes: do work, get scored, get critique, repeat.

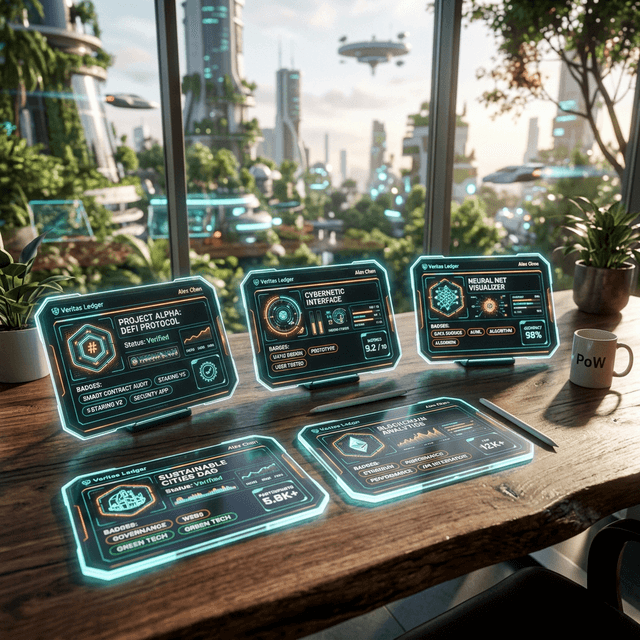

Unified Pipeline: Each completed mission becomes a card showing company, role type, difficulty, rubric score, and attached metrics. Recruiters search across these cards and pull candidates directly from the artifacts.
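As a rough illustration of the card-and-search idea, the sketch below models a mission card with the fields listed above and filters cards the way a recruiter might. The class name `MissionCard`, the function `search_cards`, the score and difficulty scales, and the sample records are all hypothetical; nothing here describes an actual ProofRun schema.

```python
from dataclasses import dataclass, field


@dataclass
class MissionCard:
    """One completed mission (hypothetical schema based on the fields above)."""
    candidate: str
    company: str
    role_type: str
    difficulty: int        # assumed scale: 1 (easy) to 5 (hard)
    rubric_score: float    # assumed scale: 0 to 100
    metrics: dict[str, float] = field(default_factory=dict)


def search_cards(cards, role_type, min_score, required_metric=None):
    """Return cards matching the filters, highest rubric score first."""
    matches = [
        c for c in cards
        if c.role_type == role_type
        and c.rubric_score >= min_score
        and (required_metric is None or required_metric in c.metrics)
    ]
    return sorted(matches, key=lambda c: c.rubric_score, reverse=True)


# Made-up sample data for illustration only.
cards = [
    MissionCard("ana", "Acme", "growth", 3, 88.0, {"roas": 2.3}),
    MissionCard("ben", "Acme", "growth", 2, 91.5),
    MissionCard("cy", "Birch", "ux", 4, 79.0, {"activation_lift_pp": 8.5}),
]

# Growth candidates scoring 80+ who have a ROAS outcome attached.
top = search_cards(cards, "growth", min_score=80, required_metric="roas")
print([c.candidate for c in top])
```

Filtering on an attached metric, not only on the rubric score, is what separates work that touched production from work that was merely graded.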

Live Business Workflows

1. Growth & Creative (Seed/Series A)

Mission: Paid social and content for a B2B SaaS startup wanting better paid acquisition.

Mission Spec

Propose 3 concepts for Meta/LinkedIn. Outline a 7-day test plan. Top candidates get $100-$300 real budget in a sandbox to launch, monitor, and adjust. Postmortem reporting required.

Outputs

Startup gets new creative. Candidate gets a portfolio card: '2.3x ROAS vs control on $200 spend; CPA down 32%'
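The figures on such a card follow from two standard ratios: ROAS is attributed revenue divided by ad spend, and CPA is ad spend divided by conversions. The sketch below works through one hypothetical set of numbers that would produce the card above. The revenue and conversion counts are invented for illustration, and it assumes "2.3x vs control" means the ratio of test ROAS to control ROAS.

```python
def roas(revenue, spend):
    """Return on ad spend: attributed revenue divided by ad spend."""
    return revenue / spend


def cpa(spend, conversions):
    """Cost per acquisition: ad spend divided by number of conversions."""
    return spend / conversions


def relative_change(new, old):
    """Fractional change from old to new (negative means a decrease)."""
    return (new - old) / old


# Hypothetical campaign data: both arms spend $200.
control = {"spend": 200.0, "revenue": 300.0, "conversions": 17}
test = {"spend": 200.0, "revenue": 690.0, "conversions": 25}

# Ratio of test ROAS to control ROAS (assumed reading of "2.3x vs control").
roas_ratio = roas(test["revenue"], test["spend"]) / roas(
    control["revenue"], control["spend"]
)
cpa_change = relative_change(
    cpa(test["spend"], test["conversions"]),
    cpa(control["spend"], control["conversions"]),
)

print(f"{roas_ratio:.1f}x ROAS vs control; CPA change {cpa_change:.0%}")
```

On a $200 budget the conversion counts are small, so a card like this is better read as a directional signal than as a statistically robust result.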

2. UI/UX (Product-heavy teams)

Mission: Fix an onboarding flow losing users in the first session for a Series A consumer app.

Mission Spec

Record a 10-15 minute Loom walkthrough analyzing friction points. Suggest UX/copy changes using AI. Top candidates get Figma access to ship an updated flow with an experiment design.

Outputs

If A/B tested, ProofRun attaches the result: 'Selected for test; +8.5 percentage point activation over 30 days.'
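A percentage-point lift is not the same as a relative lift, and the difference matters when reading these cards. The short sketch below computes both from hypothetical A/B counts chosen so the absolute lift matches the example above. The function names and the user counts are assumptions for illustration.

```python
def activation_rate(activated, signups):
    """Share of new signups who reached the activation event."""
    return activated / signups


def lift_percentage_points(treatment_rate, control_rate):
    """Absolute difference between two rates, in percentage points."""
    return (treatment_rate - control_rate) * 100


def lift_relative(treatment_rate, control_rate):
    """Relative improvement of treatment over control, as a fraction."""
    return (treatment_rate - control_rate) / control_rate


# Hypothetical 30-day A/B test with 1,000 signups per arm.
control_rate = activation_rate(400, 1000)    # 40.0% activation
treatment_rate = activation_rate(485, 1000)  # 48.5% activation

pp = lift_percentage_points(treatment_rate, control_rate)
rel = lift_relative(treatment_rate, control_rate)
print(f"+{pp:.1f} pp activation ({rel:.1%} relative lift)")
```

With these made-up counts, the same result could be quoted as roughly 8.5 points or roughly 21 percent, so a card should state which one it means.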

3. Product Management (Founder teams)

Mission: Roadmap decision and PRD for a growth-stage startup.

Mission Spec

Write a 2-page decision memo choosing a roadmap and justifying it using user feedback (NPS comments, analytics). Top candidates receive a real feature request and write a PRD and AI-orchestration plan.

Outputs

If the feature ships, ProofRun links the PRD to post-launch impact: 'Used as base spec; activation +4%; no change in churn.'

4. Analyst Missions (Venture/Firms)

Mission: Find non-obvious, correct theses based on historical financials and data.

Mission Spec

Using curated inputs, write a 1-2 page memo with a clear thesis, key drivers, major risks, 3 scenarios, and explicit triggers that would change the candidate's mind.

Outputs

If the firm takes a position, ProofRun timestamps the mission and tracks relative performance vs benchmark.

Neglectedness

Market Dynamics

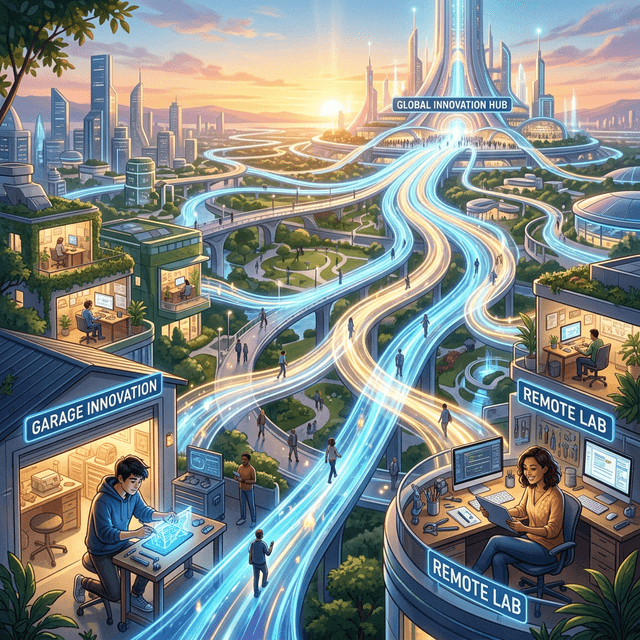

Three big systems are misaligned: Early talent needs a way to buy into trajectories with artifacts rather than credentials. Hiring software treats static resumes as the object of evaluation instead of measuring tool-assisted capability. Remote culture normalized async work, but not structured hiring missions.

First principles: when experimentation and automation get cheaper, it becomes rational to let outsiders touch real work in small, well-bounded ways.

Why Now

AI is already embedded. Tools built on large language models are changing workflows. Entry-level roles in AI-exposed work are shrinking fastest.

Founders already share dashboards and experiments in public. Turning a backlog item into a public mission is a small extension. The friction is no longer scientific; it is product design, trust, and distribution.

Business Model

- Startups: Pay to publish missions, pay per hire originating from missions, and pay for private assessment workspaces.

- Recruiters: Pay for candidate graph access, filters by outcome metrics, and ATS integrations.

- Candidates: Free core platform. Paid layer for structured mentorship, critique, and deep analytics.

Startup Wedge

Seed & Series A startups are overwhelmed by low-signal funnels. One strong growth or product hire changes trajectory immediately. They get cheap or free exploratory work out of the backlog, high signal on AI usage, and brand exposure as a "build-in-public" company.

Moat and Defensibility

Difficulty to Bring to Market

Buildable now, but trust, cold start, and legal design are harder than the software.

Moat Potential

The thin version of this business has weak defensibility. The compounding version has real teeth if it becomes the ledger for human-plus-AI performance.

The verifiable history of turning capability into results.

In an AGI world where raw capability is nearly free, the durable edge is trust, built from repeated, measurable performance in real environments.

As AI systems improve, three human levers stay scarce: Taste about what "good" looks like, Context about users and constraints, and Trust. ProofRun is built to measure those levers over time.

Roadmap: Build a mission graph that predicts the highest-leverage tasks. Extend to human-plus-agent orchestration, where candidates supervise fleets of agents, and the platform tracks who repeatedly makes strong calls.

Civilizational Impact

Left alone, AI chews through the apprentice work where people used to learn judgment. That is how you produce a generation with tool access but without enough real reps in judgment, prioritization, and ownership.

ProofRun pushes the system toward a better equilibrium: Real problems become structured chances for ambitious people to practice and get paid. AI literacy develops in live workflows. Founders get a rational reason to carve off meaningful chunks of work instead of pushing everything to agents.

At scale, this recreates the apprenticeship layer that the AI transition is currently eroding.

Impact Score: 64

Key Performance Indicators

- Active companies posting monthly

- Mission completion rate

- Percentage of missions leading to interviews

- Median time from mission post to shortlist

- Candidate portfolio public visibility rate

First Experiment

Hypothesis: Seed and Series A startups will replace or augment take-home tests with Mini Missions, producing real hires and contracts.

Test: Onboard 10-15 startups. Launch 1 mission per company. Bring in 200-300 candidates. In 90 days, check: 30% of missions lead to an interview, 10 actual hires/contracts occur, and 70% of hired candidates make their metrics public.
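The 90-day check can be written down as three explicit pass/fail conditions. The sketch below is one way to do that, assuming each target is a minimum threshold (the text does not say "at least"). The names `ExperimentResults` and `evaluate` and the sample counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExperimentResults:
    """Raw counts collected at the end of the 90-day window (hypothetical)."""
    missions_launched: int
    missions_with_interview: int
    hires_or_contracts: int
    hired_with_public_metrics: int


def evaluate(r):
    """Check each target from the test plan, treating it as a minimum."""
    interview_rate = r.missions_with_interview / r.missions_launched
    # Avoid division by zero when no hires occurred.
    public_rate = (
        r.hired_with_public_metrics / r.hires_or_contracts
        if r.hires_or_contracts
        else 0.0
    )
    return {
        "interview_rate_ok": interview_rate >= 0.30,  # 30% of missions
        "hires_ok": r.hires_or_contracts >= 10,       # 10 hires/contracts
        "public_metrics_ok": public_rate >= 0.70,     # 70% publish metrics
    }


# Made-up outcome: 12 missions, 4 led to interviews, 10 hires, 7 public.
results = ExperimentResults(12, 4, 10, 7)
checks = evaluate(results)
print(checks, "-> hypothesis supported:", all(checks.values()))
```

Fixing the thresholds before the experiment starts keeps the result from being reinterpreted after the fact.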

Transferable Insight

Turn the backlog into a robust trust API.

In a world of abundant AI capability, credentialism collapses. The only unfakeable signal left is the deterministic execution of live, scoped tasks. The lever for builders is shifting systems away from historical proxies and instead wrapping real-world production outputs into verifiable, public data objects to scale trust.

Valuation Forecast

Probability that the category leader in this space reaches at least each valuation threshold.

AI Rationale

Allowing companies to turn real backlog work into mini-missions for candidates targets the core bottleneck of tech recruiting. The solution is highly viable but faces intense competition from incumbent applicant tracking systems. The AGI Futures forecaster model projects a historically well-paved path to a $1B+ profitable exit, leading to strong mid-tier probability density.
